For the past decade, AWS Lambda has been defined by its simplicity and its constraints. While the serverless model excels at scaling and operational ease, it historically required architects to manage specific trade-offs: persistent infrastructure needs were often offloaded to ECS or EC2, and complex state management was handled via Step Functions.

At re:Invent 2025, AWS introduced two major features that fundamentally change how we build on the platform: Lambda Managed Instances and Lambda Durable Functions. These updates address the “stateless and ephemeral” bottlenecks that have long influenced serverless design patterns.

If you’re evaluating how these new capabilities fit into your roadmap, this guide breaks down the details.

TL;DR: Executive Summary

- Lambda Managed Instances: Optimized for high-volume, predictable traffic. It runs in your account on EC2 Nitro, supports multi-concurrency, and is compatible with EC2 Savings Plans.

- Lambda Durable Functions: Optimized for multi-step workflows (like AI agents or order processing). It uses a checkpoint-and-replay mechanism to stay active for up to a year without charging for idle time.

- A hands-on one-click deployment demo architecture is included to get you up and running with Lambda Durable Functions in 5 minutes.


Defining Lambda: The Pre-2026 Environment

To understand the impact of these changes, we have to look at the original Lambda execution model. Built on Firecracker MicroVMs, Lambda was designed as a high-speed “sprinter”.

AWS Lambda is a serverless compute service where you upload small pieces of code called functions, and AWS runs them for you automatically whenever something happens, like an HTTP request or a file upload. Unlike traditional servers or virtual machines that you have to provision, configure, and keep running 24/7, Lambda only runs your code when it’s needed. You don’t manage the operating system, patching, or capacity planning, because AWS handles all of that infrastructure for you, letting you focus purely on your application logic.

Before the recent Lambda updates, using Lambda typically meant navigating several standard limitations:

- The Single-Concurrency Rule: Every execution environment was dedicated to a single request. This led to frequent cold starts and made it difficult to share resources like database connection pools under heavy load.

- Ephemeral State: If a function was interrupted or timed out, all in-memory progress was lost.

- Synchronous Waiting: If a function had to wait for an external API, you remained “on the clock” for compute duration, even while the CPU sat idle.

Lambda Managed Instances: Serverless Ease, EC2 Power

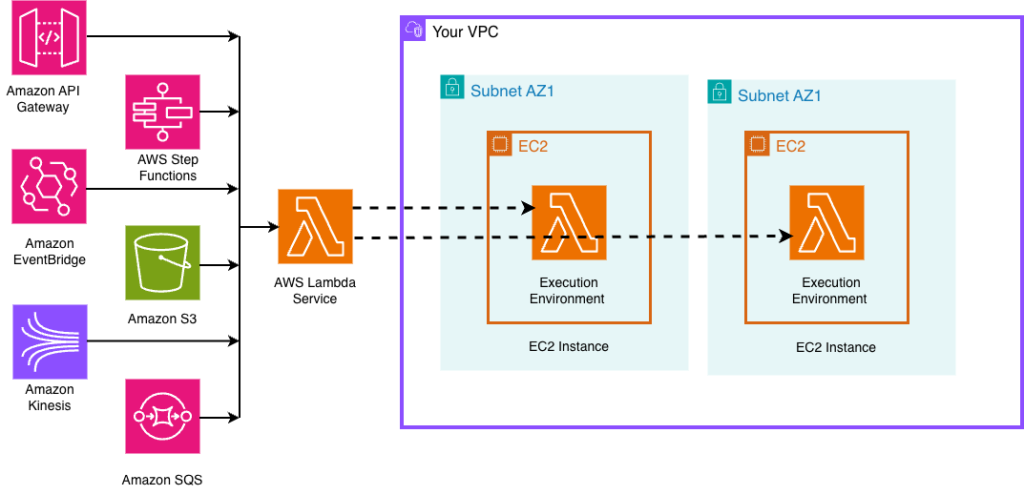

Lambda Managed Instances is a newer type of AWS Lambda offering where your functions still run like normal serverless code, but instead of using short-lived, shared environments, they run on dedicated EC2-based compute that AWS manages for you behind the scenes. You keep the simplicity of Lambda (no servers to manage, automatic scaling, event-driven execution) while gaining more predictable performance, better cost control for steady workloads, and access to more powerful hardware options typically associated with EC2.

Managed Instances bridge the gap for teams that love the Lambda programming model but need the pricing and performance of dedicated EC2 hardware (like Graviton5).

Key Technical Advantages:

- Multi-Concurrency: Unlike standard Lambda, where one sandbox handles one request, a Lambda Managed Instance environment can handle multiple invocations simultaneously. This is a game-changer for I/O-heavy applications like web services, as it yields much better resource utilization. By handling multiple requests per instance, you’re not just saving on cold starts – you’re getting more ‘work’ out of every dollar spent on the underlying hardware.

- Predictable Scaling: By default, Lambda Managed Instances can absorb traffic spikes of up to 50% without needing to scale, and can double their capacity every five minutes.

- 15-Minute Initialization: You now have a 15-minute window to load heavy libraries or machine learning models. Because the instances stay “warm,” this initialization cost is amortized across thousands of requests.
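To make the scaling figures above concrete, here is a rough back-of-the-envelope sketch. It assumes a deliberately simplified model that is not taken from AWS documentation: current capacity absorbs 50% extra traffic immediately, and capacity then doubles once every five minutes. The function name and its parameters are hypothetical.

```python
import math


def minutes_until_capacity(current_rps: float, target_rps: float,
                           headroom: float = 0.5,
                           doubling_minutes: int = 5) -> int:
    """Estimate how long until a traffic level can be served.

    Simplified model (an assumption, not AWS's published algorithm):
    capacity absorbs `headroom` extra traffic right away, then doubles
    once per `doubling_minutes`.
    """
    if current_rps <= 0 or target_rps <= 0:
        raise ValueError("request rates must be positive")
    absorbable = current_rps * (1 + headroom)
    if target_rps <= absorbable:
        return 0  # the spike fits inside the default headroom
    doublings = math.ceil(math.log2(target_rps / absorbable))
    return doublings * doubling_minutes


# Starting from 100 requests/second of steady traffic:
for target in (140, 300, 1200):
    print(target, "rps ->", minutes_until_capacity(100, target), "min")
```

Under these assumptions, a jump beyond the headroom takes at least one five-minute doubling to absorb, which is why very bursty workloads may be a better fit for standard Lambda.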

The Business Case: Because Lambda Managed Instances use EC2-based pricing (plus a 15% management fee), you can apply Reserved Instances and Savings Plans. For steady-state workloads, this can offer up to a 72% discount over standard on-demand pricing.

Lambda Durable Functions: Orchestration in Code

Lambda Durable Functions is a way to build long-running, multi-step workflows directly inside your Lambda code, where AWS automatically keeps track of progress, handles failures, and resumes execution when needed. Instead of writing separate orchestration logic or managing state yourself, you define your workflow in normal code, and Lambda “checkpoints” each step, pauses when waiting for things like APIs or human input, and continues later without losing progress, even if that takes minutes, days, or months.

Durable Functions solve the “state” problem for long-running processes. Whether you’re chaining AI prompts or waiting for a manager’s email approval, you can now write that logic as a single, continuous function.

How the “Magic” Works:

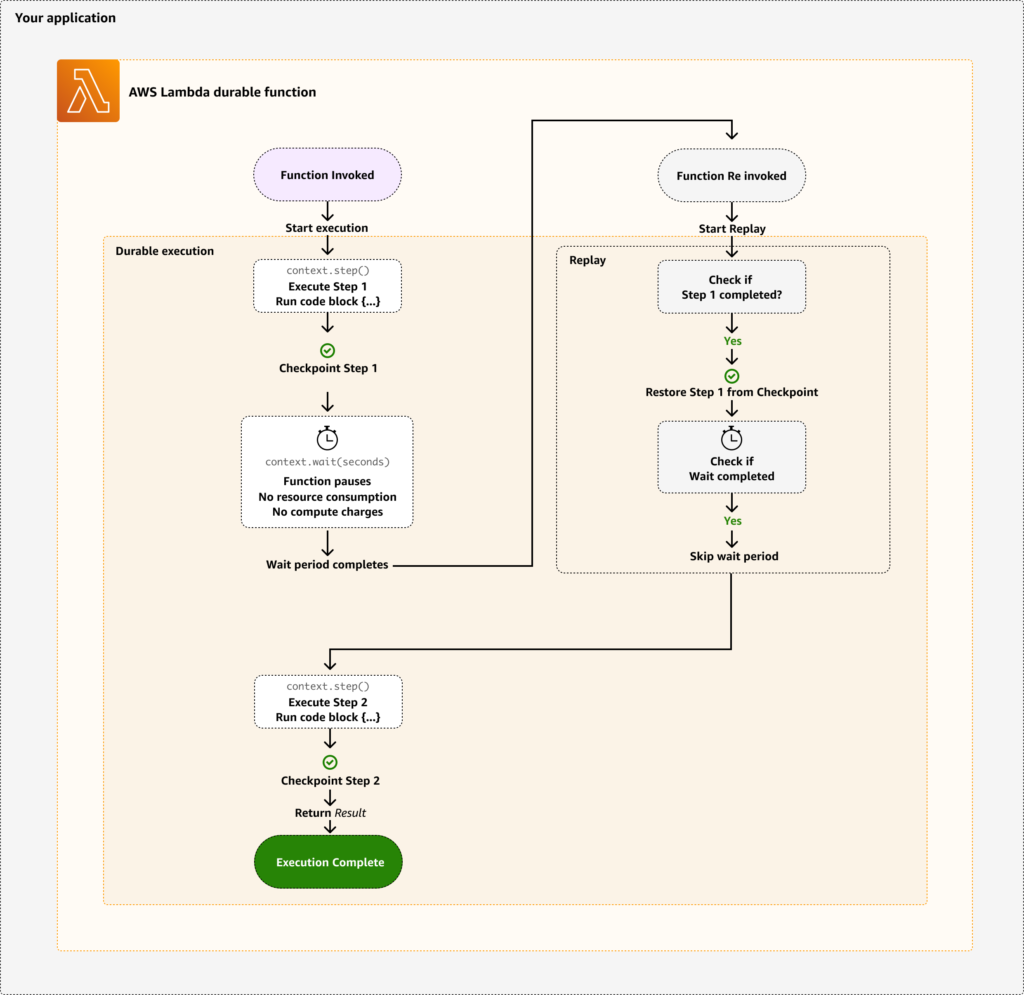

Durable Functions use a checkpoint-and-replay mechanism.

- Checkpointing: Every time your code completes a “step,” Lambda saves the result.

- Hibernation: When your code hits a “wait” (for a callback or a timer), the function shuts down. You stop paying for compute immediately.

- Replay: When the event arrives, Lambda restarts the function. It quickly “replays” the code, skipping over completed steps by using the stored results, and picks up exactly where it left off.

```python
# Import path assumed from the AWS Durable Execution SDK for Python;
# verify it against the SDK documentation.
from aws_durable_execution_sdk_python import DurableContext, durable_execution

# validate_and_reserve, notify_approver, and fulfill_order are
# application helpers defined elsewhere.


@durable_execution  # enables checkpoint-and-replay
def lambda_handler(event, context: DurableContext):
    # ① CHECKPOINT: result is saved to the state store.
    order = context.step(
        lambda _: validate_and_reserve(event["orderId"]),
        name="validate-order",
    )

    # ② HIBERNATION: function exits; billing stops.
    approval = context.wait_for_callback(
        lambda callback_id: notify_approver(order, callback_id),
        name="await-payment",
    )

    # ③ REPLAY: resumes here using stored results.
    if not approval["approved"]:
        return {"status": "declined"}

    result = context.step(
        lambda _: fulfill_order(order),
        name="fulfill-order",
    )
    return {"status": "fulfilled", "tracking": result["trackingNumber"]}
```

Because of the replay model, your workflow code must be deterministic. Non-deterministic operations such as random values or current timestamps must be handled carefully, typically by running them inside a step so that the result is checkpointed and reused on replay.
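The replay behaviour can be modeled in a few lines of plain Python. The sketch below is a toy simulation, not the AWS SDK: `ToyContext`, `Suspend`, `workflow`, and `run` are hypothetical names invented for illustration. It shows a completed step being skipped on the second invocation, and why a random value generated inside a step stays stable across replays.

```python
import random


class Suspend(Exception):
    """Models the function hibernating while it waits for a callback."""


class ToyContext:
    """Toy stand-in for a durable context (not the real AWS API)."""

    def __init__(self, checkpoints, callbacks):
        self.checkpoints = checkpoints  # step name -> saved result
        self.callbacks = callbacks      # wait name -> delivered payload
        self.executed = []              # steps that really ran this time

    def step(self, fn, name):
        if name in self.checkpoints:
            return self.checkpoints[name]  # replay: reuse stored result
        result = fn()
        self.checkpoints[name] = result    # checkpoint the new result
        self.executed.append(name)
        return result

    def wait_for_callback(self, name):
        if name in self.callbacks:
            return self.callbacks[name]
        raise Suspend(name)                # nothing delivered yet


def workflow(ctx):
    # The random value is produced inside a step, so replay sees the same one.
    token = ctx.step(lambda: random.randint(0, 10**9), name="make-token")
    approved = ctx.wait_for_callback(name="await-approval")
    receipt = ctx.step(lambda: f"shipped-{token}", name="ship")
    return {"approved": approved, "token": token, "receipt": receipt}


def run(store, callbacks):
    ctx = ToyContext(store, callbacks)
    try:
        return workflow(ctx), ctx.executed
    except Suspend:
        return None, ctx.executed


store = {}  # plays the role of the durable state store
first, ran_first = run(store, {})                           # suspends at the wait
second, ran_second = run(store, {"await-approval": True})   # replays, then finishes
print(ran_first, ran_second)
```

If the random token were generated outside a step, the second invocation would compute a different value from the first, and any logic depending on it would diverge from the checkpointed history.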

When to use Durable Functions:

- Resilient Payments: Multi-step transactions where you need a guaranteed audit trail and automatic retries on failure.

- AI Agent Workflows: Chaining multiple model calls where some steps might take minutes to process.

- Human-in-the-Loop: Onboarding or approval flows that can span days or weeks.

Choosing Your Orchestrator: Durable vs. Step Functions

Lambda Durable Functions and Step Functions both handle workflow orchestration with built-in state management and failure recovery, but they differ in how you build and run those workflows: Durable Functions let you define multi-step workflows directly in your application code inside Lambda, keeping logic close to your business logic, while Step Functions is a separate AWS service where workflows are defined as JSON-based state machines with strong visual tooling and deep integrations across many AWS services.

| Feature | Durable Functions | Step Functions |
| --- | --- | --- |
| Primary Method | Code (Python, Node.js) | ASL (JSON/YAML) or Visual Builder |
| Development | IDE-centric / Unit testable | Visual / Console-centric |
| Best For | Complex internal app logic | Multi-service orchestration |

The Decision Rule: Use Step Functions when you need a visual audit trail or are connecting various AWS services with minimal code. Use Durable Functions for logic-heavy workflows, nested loops, or when your development team prefers to stay within their local IDE environment.

How These Fit into a Modern Serverless Architecture

In 2026, a well-architected application rarely uses just one type of Lambda. Instead, you assign the right tool to the right job:

- Standard Lambda: Remains your “front-line” for bursty, unpredictable event-driven triggers (like S3 uploads or DynamoDB Streams). If you go from 0 to 1,000 requests in a second, the MicroVM architecture is still the fastest way to scale. It remains the best choice for small, fire-and-forget tasks like image resizing or log processing.

- Managed Instances: Becomes your “steady-state compute layer” for high-traffic APIs or background workers. If your function is constantly running, paying “per-request” is a tax you don’t need to pay. Lambda Managed Instances allows you to treat Lambda like a high-performance web server while keeping the serverless deployment workflow.

- Durable Functions: Acts as your “orchestration layer,” replacing complex “glue code”. Choose Durable Functions when the logic is more important than the speed. If you have a process that involves multiple steps, retries, or “wait states”, Durable Functions provide resilience. If the underlying hardware fails during step 5, the function simply resumes from the last checkpoint rather than restarting the whole process.

| Scenario | Recommendation |
| --- | --- |
| Highly unpredictable, bursty traffic | Standard Lambda |
| Steady-state traffic with high throughput | Managed Instances |
| Long-running logic with multiple “wait” steps | Durable Functions |
| Complex UI-heavy visual workflows | Step Functions |
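As a rough illustration, the table above can be written down as a small decision helper. The `Workload` fields and the order in which the checks run are assumptions made for this sketch; real workloads often match more than one row and deserve a closer look.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    """Hypothetical description of a workload's main traits."""
    steady_high_throughput: bool = False
    has_wait_steps: bool = False
    needs_visual_workflow: bool = False


def recommend(workload: Workload) -> str:
    # Check order is an assumption: orchestration needs are considered
    # first, then traffic shape. Bursty traffic is the default case.
    if workload.needs_visual_workflow:
        return "Step Functions"
    if workload.has_wait_steps:
        return "Durable Functions"
    if workload.steady_high_throughput:
        return "Managed Instances"
    return "Standard Lambda"


print(recommend(Workload(has_wait_steps=True)))
print(recommend(Workload(steady_high_throughput=True)))
```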

Reference Architectures

Architecture isn’t just about where the code runs; it’s about how these components talk to each other. Here is how a 2026-ready stack looks in practice:

- High-Throughput API:

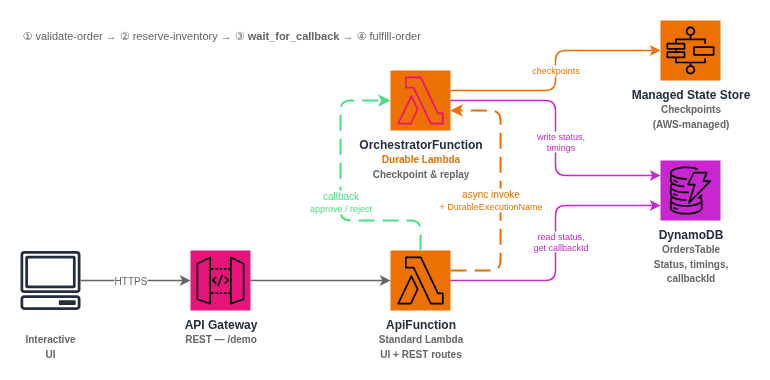

API Gateway→Lambda Managed Instance (running Graviton5)→RDS. This allows for persistent database connection pooling and significantly lower costs for steady traffic. - Order Processing Workflow:

SQS Trigger→Durable Function. The code runscheck_inventory(), pauses forcontext.wait_for_callback(payment_webhook), and resumes toship_item()days later. - AI Data Pipeline:

S3→Lambda Managed Instance (Heavy PDF OCR)→Durable Function (LLM summarization). Lambda Managed Instances handles the heavy initialization of the libraries, while Durable manages the multi-step AI logic.

Let’s Talk Money: Lambda in Production

With these new execution models, billing shifts from a simple duration-based charge to a more nuanced calculation that weighs compute time against orchestration overhead.

Lambda Managed Instances: The Capacity Play

Pricing for Managed Instances is no longer about milliseconds; it’s about instance uptime. You are effectively leasing a managed slice of EC2.

- EC2 Instance Cost: You pay the standard hourly rate for the instance type you choose (C, M, or R families).

- 15% Management Fee: AWS charges a flat 15% premium on top of the EC2 on-demand price to handle the “serverless” management (patching, scaling, and routing).

- Request Fee: A flat $0.20 per 1 million requests, similar to standard Lambda.

- The Breakeven Point: Because you can apply 3-year Compute Savings Plans (which offer up to 72% discounts on the EC2 portion), Lambda Managed Instances become significantly cheaper than standard Lambda once your function’s average CPU utilization crosses 30%. If your function is “warm” and processing traffic continuously, you can save 40–60% compared to standard duration-based billing.
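The pricing components above can be combined into a rough monthly estimate. This is a minimal sketch under stated assumptions: the $0.10 hourly rate is a hypothetical placeholder rather than a real instance price, the 15% fee is assumed to be calculated on the undiscounted on-demand price, and a single instance is assumed to be able to serve the whole request volume. The standard Lambda rate is the $0.0000166667 per GB-second figure quoted in this post.

```python
MANAGEMENT_FEE = 0.15            # 15% of the EC2 on-demand price
REQUEST_FEE_PER_MILLION = 0.20   # USD per 1 million requests
STANDARD_GB_SECOND = 0.0000166667
HOURS_PER_MONTH = 730


def managed_instance_monthly_cost(on_demand_hourly, requests,
                                  savings_discount=0.0, instances=1):
    """Monthly cost: discounted EC2 + management fee + request fee."""
    ec2_on_demand = on_demand_hourly * HOURS_PER_MONTH * instances
    ec2_after_discount = ec2_on_demand * (1 - savings_discount)
    fee = ec2_on_demand * MANAGEMENT_FEE  # assumed: fee on on-demand price
    request_fee = requests / 1_000_000 * REQUEST_FEE_PER_MILLION
    return ec2_after_discount + fee + request_fee


def standard_lambda_monthly_cost(requests, avg_duration_s, memory_gb):
    """Monthly cost: duration (GB-seconds) + request fee."""
    gb_seconds = requests * avg_duration_s * memory_gb
    request_fee = requests / 1_000_000 * REQUEST_FEE_PER_MILLION
    return gb_seconds * STANDARD_GB_SECOND + request_fee


# 100M requests/month, 100 ms each at 1 GB; hypothetical $0.10/hour instance.
requests = 100_000_000
standard = standard_lambda_monthly_cost(requests, 0.1, 1.0)
on_demand = managed_instance_monthly_cost(0.10, requests)
committed = managed_instance_monthly_cost(0.10, requests, savings_discount=0.72)
print(f"standard={standard:.2f} on_demand={on_demand:.2f} committed={committed:.2f}")
```

Swap in the real hourly rate for your instance type and a realistic instance count before drawing conclusions; the breakeven point moves a lot with both.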

Lambda Durable Functions: The Orchestration Fee

With Durable Functions, you stop paying for idle time, but you introduce a small fee for the “brain” that tracks your progress.

- Orchestration Operations: You are charged $8.00 per 1 million durable operations (this includes every checkpoint, step, and wait call).

- State Storage:

  - Data Written: $0.25 per GB written to the state store.

  - Data Stored: $0.15 per GB-month for maintaining the workflow state (usually negligible unless you have millions of active, year-long workflows).

- The Logic: If your function spends 95% of its time in a context.wait() state (e.g., waiting for a manager to approve a loan), your compute cost drops to near zero. You are effectively trading expensive CPU idle time for a tiny $8.00/million “management tax.”
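A quick calculation shows the size of that trade. The sketch below uses the prices quoted in this section and assumes that active compute in a durable function is billed at the standard $0.0000166667 per GB-second rate; request fees are left out on both sides. The workflow numbers (10 minutes of wall time, 2 seconds of real work, 5 durable operations) are made up for illustration.

```python
DURABLE_OP_PER_MILLION = 8.00     # USD per 1 million durable operations
DATA_WRITTEN_PER_GB = 0.25        # USD per GB written to the state store
DATA_STORED_PER_GB_MONTH = 0.15   # USD per GB-month of stored state
GB_SECOND = 0.0000166667          # assumed duration rate for active compute


def durable_workflow_cost(active_seconds, memory_gb, operations,
                          gb_written=0.0, gb_months_stored=0.0):
    """Cost of one workflow run that hibernates while waiting."""
    compute = active_seconds * memory_gb * GB_SECOND
    orchestration = operations / 1_000_000 * DURABLE_OP_PER_MILLION
    storage = (gb_written * DATA_WRITTEN_PER_GB
               + gb_months_stored * DATA_STORED_PER_GB_MONTH)
    return compute + orchestration + storage


def active_wait_cost(total_seconds, memory_gb):
    """Cost of one run that stays on the clock for the whole wait."""
    return total_seconds * memory_gb * GB_SECOND


# Hypothetical run: 600 s wall time, 2 s of real work, 1 GB, 5 operations.
durable = durable_workflow_cost(active_seconds=2, memory_gb=1.0, operations=5)
waiting = active_wait_cost(total_seconds=600, memory_gb=1.0)
print(f"durable=${durable:.7f} active_wait=${waiting:.7f}")
```

The longer the wait relative to the active work, the larger the gap becomes, since the orchestration charge depends on the number of operations rather than on elapsed time.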

Standard Lambda: The Default Sprinter

Standard Lambda remains on the same $0.0000166667 per GB-second model.

| Feature | Standard Lambda | Managed Instances | Durable Functions |
| --- | --- | --- | --- |
| Primary Cost | GB-seconds (Duration) | Hourly Instance Rate | $8.00/1M Ops + Duration |
| Commitment | N/A | Savings Plans / RIs | N/A |
| Waiting Cost | Full Price (Active wait) | Full Price (Instance idle) | $0.00 (Hibernation) |
| Management Fee | Included | 15% on Instance price | Included |

Disclaimer: Pricing reflected in this post is based on the US East (N. Virginia) region as of April 2026. AWS pricing varies by region and is subject to change. For production cost estimates, always refer to the official AWS Lambda Pricing Page or the AWS Pricing Calculator.

Get Started with Durable Functions: One-Click Deploy

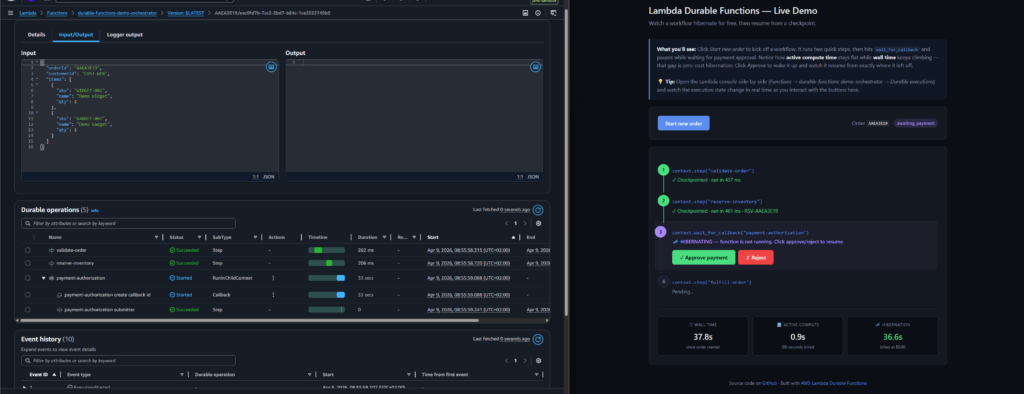

The best way to understand the checkpoint-and-replay model is to watch it happen. The interactive demo below deploys a real order processing workflow – the same pattern described in the reference architecture above – directly into your AWS account with a single click. No CLI, no code changes, no build tools required. CloudFormation provisions two Lambda functions, an API Gateway, and a DynamoDB table, then surfaces a URL to a live web UI.

The button below deploys the architecture in one click. Once the stack is deployed, copy the URL for the user interface from the Outputs tab of the durable-functions-demo stack.

In the user interface, click Start new order to kick off the workflow. You will watch two steps complete and checkpoint, then the function hits context.wait_for_callback() and goes dark. The UI’s metrics panel makes the core value proposition concrete: wall time keeps climbing while active compute stays flat. That gap – which can span seconds, hours, or days in production – is the hibernation period, billed at exactly $0.00. Click Approve payment to send the callback, and the orchestrator replays from its last checkpoint and runs the fulfillment step to completion without re-executing the work it already did. The full source code is available on GitHub.

Summary

The 2025 Lambda updates provide developers with more granular choices. Standard Lambda remains the best tool for bursty, event-driven triggers. Managed Instances provide the predictability needed for enterprise-scale APIs. Durable Functions allow us to write long-running, resilient logic without the overhead of external state management.

The question for 2026 is no longer about working around Lambda’s limits, but rather choosing the specific execution model that best fits your workload and budget.