Bedrock vs. SageMaker AI: A Strategic Guide for Startups (And Why You Might Need Neither)

The first wave of generative AI was about novelty. In 2026, that novelty has worn off. Most teams have already seen a model generate text, summarize documents, or answer questions.

The real question now is no longer “Can we build this?” but “How do we build this sustainably, at scale, and with a cost model the business can live with?”

If you’re a founder, product owner, or technical lead, you’re likely standing at an architectural fork in the road:

- Do you use a managed service like Amazon Bedrock and ship fast?

- Do you build and own your models using Amazon SageMaker AI?

- Or is a large language model simply the wrong tool for the job?

This guide cuts through the buzzwords. We’ll look at when to buy intelligence, when to build it, and when not to use generative AI at all – because in many cases, that third option is the smartest one. Underneath, this is less a tooling decision than a business and operating-model decision.

TL;DR: The Infrastructure Cheat Sheet

When startups evaluate AWS AI services, the real decision is which layer of the AI stack to consume or own. On AWS, there are three distinct paths for building AI-powered products, each optimized for a different business constraint.

Amazon Bedrock – Managed Generative AI

Amazon Bedrock is the fastest way to add generative AI on AWS. It provides managed access to foundation models such as Claude, Llama, and Amazon Nova through a single API, with built-in scaling, security, and governance. Bedrock is ideal when speed, safety, and rapid iteration matter more than owning the underlying models. This is a good starting point for many startups and SaaS teams when adding AI features.

Amazon SageMaker – Custom Machine Learning and Model Ownership

Amazon SageMaker is AWS’s platform for teams that need to train, fine-tune, and operate their own machine learning models. It becomes important when startups need full control over training data, model weights, compliance boundaries, or long-term unit economics – in other words, when AI becomes a core business asset rather than a feature.

Purpose-Built AI and Amazon Q – When You Don’t Need Generative AI

For many business problems, generative AI is unnecessary. AWS provides purpose-built AI services such as Textract, Rekognition, Transcribe, and Translate that deliver reliable, low-cost results for document processing, speech recognition, image analysis, and translation. In addition, Amazon Q delivers embedded generative AI directly inside business workflows, eliminating the need to build or operate AI systems at all. In many real-world workflows, this “neither” option is not a compromise – it is often the most operationally sound choice.

Rule of thumb for startups:

- Use Amazon Bedrock when speed and iteration matter most.

- Use Amazon SageMaker when ownership, compliance, or cost control become strategic.

- Use purpose-built AWS AI services when the task is structured and repeatable.


The Three Ways to Build AI on AWS in 2026

Before talking about trade-offs, it helps to understand the landscape.

In 2026, AWS does not offer “one AI platform.” It offers fundamentally different ways to introduce intelligence into a product – each optimized for a different level of ownership, control, and speed.

Understanding the Bigger Picture

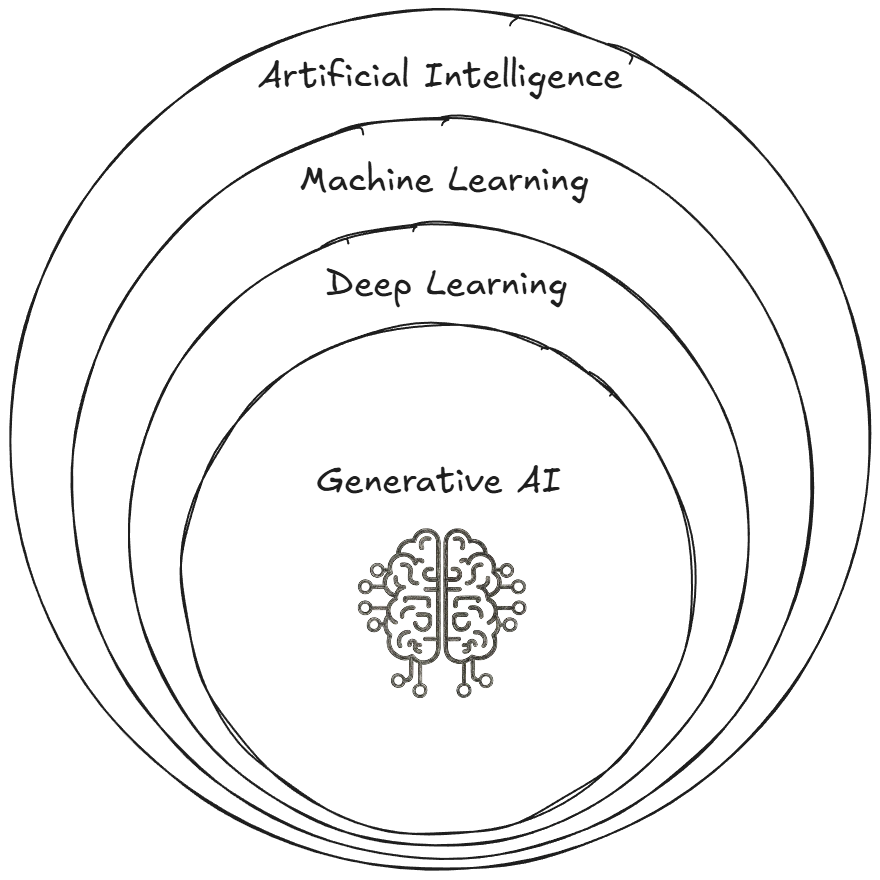

AI is more than just generative AI. The field of AI has existed for many decades, and over time specialized subsets have evolved, such as machine learning, deep learning, and generative AI.

The diagram above is not showing AWS services. It is showing the layers of intelligence that modern AI systems are built from.

The outer ring (AI) represents complete, finished capabilities – things like “read this document”, “analyze this image”, or “answer this question.” These are solved problems that can be consumed directly as APIs or applications.

The middle rings (Machine Learning and Deep Learning) represent the infrastructure layer of intelligence – models, training pipelines, and inference systems that teams must build, operate, and optimize if they want custom behavior, ownership, or lower unit costs.

The inner ring (Generative AI) is not a separate technology. It is a specific class of deep learning models that generate text, images, code, and other content. It sits on top of – and depends on – the ML and Deep Learning layers below it.

AWS services map directly onto these layers:

- Amazon Bedrock lives in the Generative AI layer. You consume foundation models and agent capabilities without owning or operating the ML stack beneath them.

- Amazon SageMaker lives in the Machine Learning and Deep Learning layers. This is where you train, fine-tune, deploy, and govern models that you own.

- Purpose-built AI services and Amazon Q (option 3 below) live in the AI layer. They expose finished intelligence (OCR, speech, vision, assistants) without exposing models or infrastructure at all. Although services like Textract, Translate, and Rekognition are built using machine learning internally, they are consumed as finished AI capabilities rather than as models or ML infrastructure, which is why they sit in the AI layer of this stack.

Let’s deep-dive into each category to better understand what is offered by the different AWS services.

Option 1: Amazon Bedrock – Consuming Intelligence

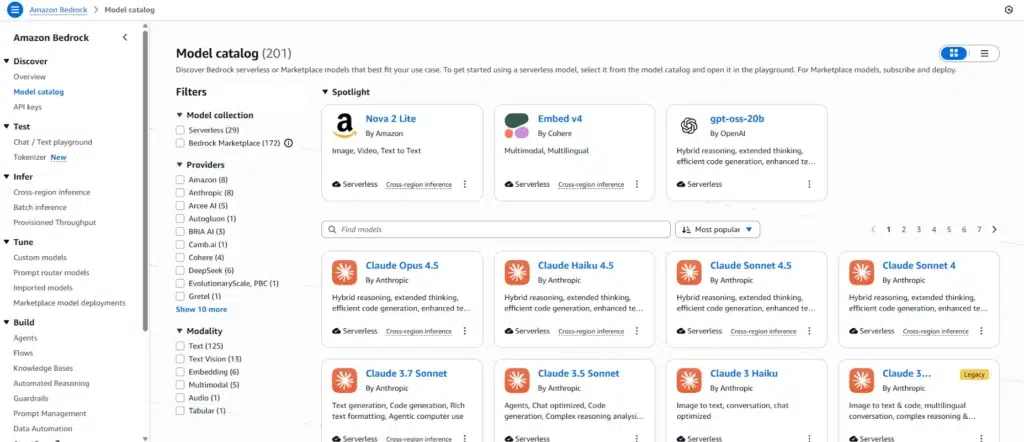

Amazon Bedrock represents AWS’s managed, consumption-based approach to Generative AI.

You do not train models, manage GPUs or scale infrastructure. Instead, you access foundation models from providers like Anthropic, Meta, and Amazon through a single, serverless API – and focus on what the AI does rather than how it runs.
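As a rough illustration of what "a single, serverless API" looks like in practice, here is a minimal sketch built around the Bedrock Converse API in boto3. The helper name `ask_model`, the stub client, and the model ID are illustrative assumptions, not part of any AWS SDK. The client is passed in so the function can be dry-run without credentials.

```python
from typing import Any


def ask_model(client: Any, model_id: str, prompt: str, max_tokens: int = 512) -> dict:
    """Send one user message through the Bedrock Converse API.

    `client` is expected to be a boto3 "bedrock-runtime" client.
    """
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens, "temperature": 0.2},
    )
    # The assistant message is a list of content blocks; join the text ones.
    blocks = response["output"]["message"]["content"]
    text = "".join(block.get("text", "") for block in blocks)
    usage = response.get("usage", {})
    return {
        "text": text,
        "input_tokens": usage.get("inputTokens", 0),
        "output_tokens": usage.get("outputTokens", 0),
    }


class StubBedrockRuntime:
    """Offline stand-in (hypothetical) that mimics the Converse response shape."""

    def converse(self, **kwargs):
        prompt = kwargs["messages"][0]["content"][0]["text"]
        return {
            "output": {"message": {"role": "assistant",
                                   "content": [{"text": f"echo: {prompt}"}]}},
            "usage": {"inputTokens": 4, "outputTokens": 3},
        }


# Dry run against the stub; "example-model-id" is a placeholder.
result = ask_model(StubBedrockRuntime(), "example-model-id", "Hello")
print(result)

# With real credentials and model access, the call would look like:
#   import boto3
#   ask_model(boto3.client("bedrock-runtime"), "<your-model-id>", "Hello")
```

Because the model is only a `modelId` string, switching providers is mostly a configuration change. Returning the token counts alongside the text makes it easier to track spend from the first prototype.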

In 2026, Bedrock includes far more than raw model access. It has evolved into a full application-layer AI platform, designed to operationalize intelligence safely and at scale.

At a high level, Bedrock provides the following application-layer capabilities:

- Foundation models (Claude, Llama, Amazon Nova, and others) through a unified API

- Bedrock Agents for declarative, goal-based workflows without custom orchestration

- Bedrock AgentCore for building code-driven, long-running AI agents with persistent memory and fine-grained control

- Knowledge Bases for Retrieval-Augmented Generation (RAG) over private data

- Guardrails for safety, compliance, and policy enforcement

- Multiple inference modes – including batch processing, cross-region inference, and provisioned throughput – to balance latency, availability, and cost

- Model extensibility, allowing teams to import custom models, deploy marketplace models, and route prompts across models without dropping down to SageMaker

- Built-in evaluation and reasoning capabilities for assessing model behavior

These features exist for one reason: to let teams build AI-powered products without owning or operating the ML stack.

Taken together, these capabilities make Bedrock best understood as an application-layer intelligence platform. You do not see GPUs, clusters, or model lifecycle plumbing because those concerns have been deliberately removed so teams can focus on product behavior, workflows, and user experience. Bedrock optimizes for speed, safety, and iteration by hiding complexity behind a managed layer, which is exactly what most early-stage teams need when they are still learning what to build. The question of ownership and deeper control only becomes relevant once AI has proven its value in production.

Option 2: Amazon SageMaker AI – Building and Owning Intelligence

Amazon SageMaker AI is AWS’s full-stack machine learning platform for teams that need to build, customize, and operate models they fully own. In practice, choosing SageMaker AI means choosing to run an ML platform, not just a model.


This is where you move from consuming intelligence to owning it end-to-end. You choose the training data, algorithms, model architectures, and deployment strategies, and you accept the responsibility of operating, scaling, and optimizing them over time.

SageMaker is not just a training service. It is a complete ML operating environment that spans experimentation, data preparation, deployment, governance, and human-in-the-loop workflows. Most startups will not start here, and that is intentional. SageMaker becomes relevant when ownership, unit economics, or regulatory constraints begin to outweigh speed.

At a high level, SageMaker provides:

- Model training and fine-tuning – from classic ML to large foundation models

- Managed development environments (Studio, notebooks, IDEs) for data science and ML teams

- Deployment and inference infrastructure, including real-time endpoints, batch inference, and deployment strategies like shadow testing

- MLOps tooling for pipelines, experiments, monitoring, and model governance

- Data preparation and labeling workflows, including Ground Truth and human review (A2I)

- Full VPC isolation and model ownership, ensuring data sovereignty and IP control

These capabilities give you all the building blocks for a highly customizable end-to-end owned AI pipeline.

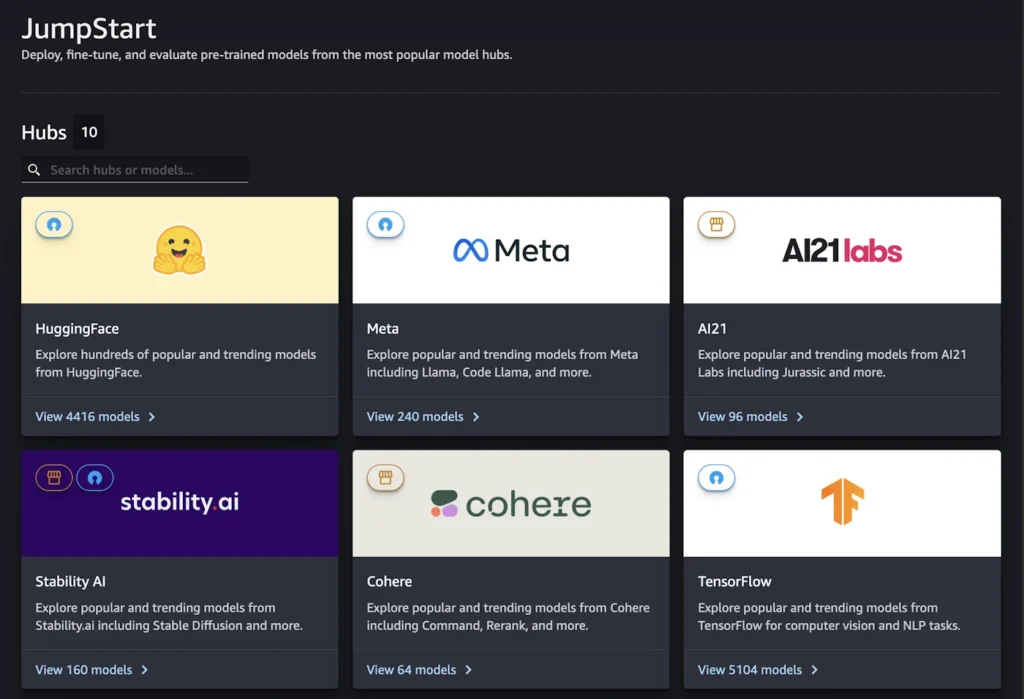

You Don’t Have to Start From Scratch

A common misconception is that choosing SageMaker means you are committing to training your own model from day one. In practice, many teams adopt SageMaker because they want more control over how models are deployed and governed, not because they want to become an AI research lab.

There are two common “fast starts” that matter for startups.

- SageMaker JumpStart gives you access to a model hub with pre-trained models and foundation models that you can deploy quickly, often with only a few lines of code using the SageMaker SDK. This is a practical way to run a known model inside your own AWS account with infrastructure you control.

- Hugging Face on SageMaker is the other common path. If your team is already evaluating open models, the Hugging Face integration lets you deploy them to SageMaker endpoints using Hugging Face tooling and the SageMaker SDK. Some of the same open models are also available through Bedrock and JumpStart, so the choice is often about where the model runs rather than which model you get. Teams that pick Hugging Face on SageMaker usually do so for latency or compliance: they want the model running inside their own VPC, not behind a shared public API endpoint.

These shortcuts reduce time to first model, but they do not remove the need to operate, monitor, and pay for the platform underneath.
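To make the operational difference concrete, here is a minimal sketch of calling a model you host yourself through the SageMaker runtime `invoke_endpoint` API. The endpoint name, the helper `invoke_text_endpoint`, and the `{"inputs": ...}` payload are assumptions. That payload shape follows a common Hugging Face container convention, but your own container defines the real request and response schema.

```python
import io
import json
from typing import Any


def invoke_text_endpoint(client: Any, endpoint_name: str, prompt: str) -> Any:
    """Call a real-time SageMaker endpoint that accepts and returns JSON.

    `client` is expected to be a boto3 "sagemaker-runtime" client.
    """
    response = client.invoke_endpoint(
        EndpointName=endpoint_name,
        ContentType="application/json",
        Accept="application/json",
        Body=json.dumps({"inputs": prompt}),  # assumed payload schema
    )
    # Body is a streaming object; read it fully before decoding.
    return json.loads(response["Body"].read())


class StubSageMakerRuntime:
    """Offline stand-in (hypothetical) for a deployed endpoint."""

    def invoke_endpoint(self, **kwargs):
        payload = json.loads(kwargs["Body"])
        reply = [{"generated_text": payload["inputs"].upper()}]
        return {"Body": io.BytesIO(json.dumps(reply).encode("utf-8"))}


# Dry run against the stub; "my-endpoint" is a placeholder name.
result = invoke_text_endpoint(StubSageMakerRuntime(), "my-endpoint", "hello")
print(result)
```

The call itself is as short as the Bedrock one. The difference is everything behind `EndpointName`: the instance type, the container image, scaling policy, and the bill for idle capacity are now yours.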

Amazon SageMaker Unified Studio is the newest evolution of the SageMaker experience – a unified data, analytics, and AI development environment that lets teams access, prepare, model, and deploy data and AI workflows from a single interface. It integrates analytics tools, data discovery and governance, ML development, and generative AI application building, helping larger teams collaborate across data and AI domains without stitching together separate consoles.

Where Trainium and Inferentia Fit

SageMaker is also where Trainium and Inferentia come into play.

These are AWS-designed AI accelerator chips intended to reduce the cost of running machine-learning workloads at scale. Trainium is optimized for training large and custom models more cost-effectively than general-purpose GPUs. Inferentia is optimized for serving models at very high request volumes with predictable latency and lower per-request cost. For early-stage teams, they are an optimization, not a starting point.

Option 3: Neither – Embedded and Purpose-Built AI

Not every problem requires a foundation model – this third option is often overlooked.

In fact, many of the most common “AI” problems businesses face – reading documents, understanding speech, analyzing images, translating text – have been solved reliably for years using purpose-built AI services. These services are designed to do one job extremely well, with predictable behavior, low cost, and minimal operational overhead.

AWS offers a broad set of purpose-built AI capabilities out of the box.

Purpose-Built AI Services on AWS

At a high level, these services fall into a few clear categories:

Image and Video Analysis: “What’s in this image or video?”

Amazon Rekognition analyzes images and video to detect and classify what they contain. Typical use cases include:

- Object and label detection

- Facial detection and comparison

- Text detection in images

- Content moderation and safety checks

- People tracking and PPE detection in industrial environments

This is commonly used in security systems, media moderation, retail analytics, and compliance workflows.

Document Understanding: “What’s written in this document?”

Amazon Textract extracts text and structured data from documents. It builds on Optical Character Recognition (OCR) but also captures layout such as tables and form fields. Sources include PDFs, scanned images, invoices, ID documents, and forms. It can:

- Read typed and handwritten text

- Extract tables and form fields

- Recognize structured documents like financial reports or IDs

This is ideal for automating document-heavy workflows in finance, insurance, healthcare, and government without relying on a generative model to “interpret” content.

Text Analysis and NLP: “What does this text mean?”

Amazon Comprehend analyzes text to extract meaning and metadata, including:

- Entities (people, organizations, locations)

- Key phrases and topics

- Sentiment and targeted sentiment

- Language detection

- Custom classification and entity recognition

This is known as Natural Language Processing (NLP) and is commonly used for analytics, reporting, and compliance – where consistency matters more than creativity.

Speech and Audio: “What was said?”

Amazon Transcribe converts speech into text, either in real time or from recorded audio. It supports:

- Speaker identification

- Multi-channel audio

- Custom vocabularies

- Content filtering for privacy and compliance

This is widely used for call analytics, meeting transcripts, media workflows, and accessibility.

Text-to-Speech: “Say this out loud.”

Amazon Polly converts text into natural-sounding speech using neural voices across multiple languages and speaking styles. Typical uses include:

- Voice assistants

- Accessibility features

- News narration

- Automated announcements

Translation: “Hello → Olá”

Amazon Translate performs fast, high-quality translation of unstructured text between languages, often embedded directly into applications and content pipelines.

Services like Rekognition, Textract, Comprehend, Transcribe, Polly and Translate exist because many business problems do not require probabilistic reasoning or generative models at all.
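As an illustration of how little code these services need, the sketch below chains Amazon Translate and Amazon Comprehend to score the sentiment of a non-English review. The helper name `translate_and_score` and the stub clients are hypothetical. `translate_text` and `detect_sentiment` are the boto3 operations it relies on.

```python
from typing import Any


def translate_and_score(translate: Any, comprehend: Any, text: str,
                        source_lang: str, target_lang: str = "en") -> dict:
    """Translate text, then classify the sentiment of the translation.

    `translate` and `comprehend` are expected to be boto3 clients for the
    "translate" and "comprehend" services.
    """
    translated = translate.translate_text(
        Text=text,
        SourceLanguageCode=source_lang,
        TargetLanguageCode=target_lang,
    )["TranslatedText"]
    sentiment = comprehend.detect_sentiment(Text=translated, LanguageCode=target_lang)
    return {
        "translated": translated,
        "sentiment": sentiment["Sentiment"],    # e.g. POSITIVE, NEGATIVE
        "scores": sentiment["SentimentScore"],  # per-class scores
    }


class StubTranslate:
    """Offline stand-in (hypothetical) with a canned translation."""

    def translate_text(self, **kwargs):
        return {"TranslatedText": "The delivery was fast, thank you!"}


class StubComprehend:
    """Offline stand-in (hypothetical) with a canned sentiment result."""

    def detect_sentiment(self, **kwargs):
        return {"Sentiment": "POSITIVE",
                "SentimentScore": {"Positive": 0.98, "Negative": 0.0,
                                   "Neutral": 0.02, "Mixed": 0.0}}


result = translate_and_score(StubTranslate(), StubComprehend(),
                             "A entrega foi rápida, obrigado!", "pt")
print(result["sentiment"], result["translated"])
```

There is no prompt to tune and no output format to police: each call returns a fixed response shape, which is what makes these services easy to wire into pipelines.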

Embedded AI: The Amazon Q Ecosystem

AWS also offers embedded AI experiences – ready-to-use generative assistants and tools that deliver intelligence directly in workflows without you having to build or manage models or infrastructure. The core components of the Amazon Q ecosystem include:

- Amazon Q Business – A generative AI assistant for enterprise users that answers questions, provides summaries, generates content, and completes tasks based on your company’s internal data and systems.

- Amazon Q Apps – A feature within Q Business that lets users turn natural language prompts into lightweight, reusable AI apps tailored to specific tasks or workflows (e.g., generating reports or automating simple processes).

- Integrated Q Experiences – Amazon Q capabilities embedded directly into AWS services like analytics dashboards and support platforms, allowing users to access AI assistance where they already work.

Embedded AI like Amazon Q is consumed directly by people inside tools and workflows rather than built into systems by engineers. It doesn’t require you to design models, build pipelines, or operate infrastructure – unlike Bedrock or SageMaker. In essence, Q is AI you use, not AI you build.

Bedrock vs. SageMaker vs. Neither – It’s a Business Decision

At a glance, the choice between Amazon Bedrock, Amazon SageMaker and other specialized services looks like a technology decision. In practice, it is usually a business decision disguised as an architectural one.

All services are production-grade and can run serious workloads. The real difference is what you are optimizing for, and what you are willing to own.

What You’re Actually Paying For

With Amazon Bedrock, you are paying for speed and focus. You deliberately outsource the most painful parts of operating generative AI systems – GPU capacity, scaling behavior, model lifecycle management, and safety guardrails – so your team can concentrate on product behavior, workflows, and user experience.

With Amazon SageMaker AI, you are paying with effort in exchange for control. You take ownership of the plumbing: training jobs, inference endpoints, container images, deployment patterns, monitoring, and cost tuning. The reward is that you can freeze model weights, standardize runtimes, isolate workloads inside your VPC, and optimize unit economics when AI becomes a meaningful cost center.

With purpose-built AI services and embedded tools like Textract, Rekognition and Amazon Q, you are paying for determinism. You trade flexibility for predictable outputs, stable schemas, and linear pricing, which makes these services ideal for structured, repeatable business workflows like document processing, transcription, classification, and translation.

None of these choices is “better.” Each simply moves complexity and responsibility to a different place in your system.

How AWS Billing Works Across the Three Options

Pricing follows the same abstraction boundaries as the technology. With Amazon Bedrock, you pay per token and per request, which makes it easy to start but harder to forecast as usage grows if controls are not in place. With Amazon SageMaker, you pay for infrastructure – instances, storage, and throughput – which produces a more predictable cost curve but requires active engineering and capacity management. With purpose-built AI services, pricing is usually linear and unit-based (per page, per minute, per image, or per request), which makes them the easiest to budget and forecast for structured workloads. Embedded tools like Amazon Q are typically priced per user, which is similarly easy to forecast.
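A back-of-the-envelope model can make these cost curves tangible. The sketch below compares per-token, per-instance, and per-unit pricing and estimates where token-based spend would match an always-on endpoint. Every price in it is a made-up placeholder, not an AWS list price, and it ignores throughput limits, storage, and engineering time.

```python
import math

HOURS_PER_MONTH = 730  # average number of hours in a month


def token_cost_per_request(input_tokens: int, output_tokens: int,
                           price_in_per_1k: float, price_out_per_1k: float) -> float:
    """Cost of one request under per-token pricing."""
    return (input_tokens / 1000 * price_in_per_1k
            + output_tokens / 1000 * price_out_per_1k)


def monthly_instance_cost(instance_count: int, hourly_rate: float) -> float:
    """Cost of always-on endpoints, independent of request volume."""
    return instance_count * hourly_rate * HOURS_PER_MONTH


def monthly_unit_cost(units: int, price_per_unit: float) -> float:
    """Linear per-page / per-minute / per-image pricing."""
    return units * price_per_unit


def break_even_requests(cost_per_request: float, instance_monthly: float) -> int:
    """Monthly request volume at which token spend reaches the instance bill."""
    return math.ceil(instance_monthly / cost_per_request)


# Placeholder numbers, for illustration only.
per_request = token_cost_per_request(1500, 500,
                                     price_in_per_1k=0.001, price_out_per_1k=0.004)
endpoint = monthly_instance_cost(instance_count=1, hourly_rate=1.50)
threshold = break_even_requests(per_request, endpoint)
pages = monthly_unit_cost(units=20_000, price_per_unit=0.01)

print(f"per request: ${per_request:.4f}")
print(f"endpoint per month: ${endpoint:.2f}")
print(f"break-even requests per month: {threshold}")
print(f"20,000 pages per month: ${pages:.2f}")
```

The useful output is not the exact figure but the shape. Token spend grows with every request, instance spend is flat until you need another instance, and unit pricing scales with the volume of work items rather than with prompt design.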

The important question is not which option is “cheapest,” but which one produces a cost curve your business can live with as AI usage scales.

The “Team DNA” Question

The hidden reason this decision feels like a fork in the road is that it changes what your team spends their day doing.

A Bedrock-first team behaves like product engineers working with AI. They focus on prompts, workflows, agents, and tool integrations, shaping how intelligence appears to users.

A SageMaker-first team behaves like an ML platform team. They spend time on model lifecycle management, performance and latency tuning, deployment pipelines, and keeping costs and reliability under control.

A team that relies on purpose-built and embedded AI behaves like an application and data-integration team. They spend their time wiring deterministic AI capabilities into business processes, building validation, auditing, and downstream logic rather than tuning models.

The Startup Decision Matrix (When to Choose What)

Most teams do not fail because they picked the wrong service. They fail because they picked the right service too early, before they had a clear reason to carry the operational load.

Bedrock – When Speed is the Constraint

For early-stage startups, Bedrock is usually the fastest path to something real. If your next milestone is product-market fit, you want a platform that minimizes time spent on infrastructure and maximizes time spent learning from users.

Bedrock is a strong default when AI is a feature, not your core IP, and when you want to ship quickly without building a dedicated ML platform. It also helps when you need safety, governance, and model access packaged as a managed layer rather than a bespoke system.

If your main question is “Can we deliver value in weeks, not quarters?”, Bedrock tends to be the right answer.

SageMaker – When Ownership and Unit Economics Become the Constraint

SageMaker becomes relevant when the problem shifts. This usually happens later, when AI is no longer a prototype feature, but a production dependency with cost and risk attached to it.

Teams reach for SageMaker when they need stronger ownership guarantees, when they want the ability to freeze models and environments for long periods, or when they need deep customization through fine-tuning and training. It also becomes relevant when usage volume makes cost per request impossible to ignore.

SageMaker is not an upgrade you “eventually do.” It is a different operating model. The right time to choose it is when the business has a clear reason to own the overhead.

Neither – When Determinism Beats Flexibility

A foundation model is a powerful tool, but it is not always the correct tool. If the task is structured, repetitive, and needs consistent outcomes, purpose-built services often win on cost and reliability.

For example, extracting fields from invoices, transcribing audio, labeling images, moderating content, and classifying text have been solved reliably for years with specialized AI services. Those services are built to be predictable, auditable, and cheap at scale.

Quick Reference – Bedrock vs SageMaker vs Other

To recap the trade-offs and differences between the three paths to AI on AWS, look at the following table:

| Dimension | Amazon Bedrock (Consume) | Amazon SageMaker (Own) | Neither (Purpose-built or embedded) |
|---|---|---|---|
| Best for | Shipping generative AI features fast | Owning models and optimizing at scale | Structured tasks that need predictability |
| Time to first value | Hours to days | Days to weeks (often longer) | Hours to days |
| What you manage | Prompts, workflows, tools, guardrails | Training, endpoints, containers, MLOps | API integration and downstream logic |
| Cost model | Pay-per-token, plus any retrieval costs | Pay for instances and storage, plus ops time | Usually per page, per minute, or per request |
| Biggest risk | Token sprawl and unpredictable usage | Operational overhead becomes the bottleneck | Limited flexibility outside the target task |
| Typical startup moment | MVP, early traction, rapid iteration | Growth stage, high volume, regulated, proprietary data | Any stage, especially when tasks are deterministic |
| Skills profile | Product engineering and integration | ML platform and operations | Application integration and data pipelines |

This table also hints at something important.

Most strong teams do not “pick one” and stay there. They mix these approaches on purpose. Bedrock often powers the user-facing reasoning layer, purpose-built services handle extraction and classification, and SageMaker is introduced only for the slice of workloads where ownership pays for itself.

A Practical Example – Invoice Extraction

Invoice extraction is a workflow many fintech startups face. You need to reliably extract fields like supplier name, invoice number, dates, and totals, often at scale and as part of a finance pipeline.

At this point, many teams reach for a foundation model because it feels universal. You can solve the problem that way, but it is rarely the most robust approach.

Two Ways to Solve the Same Task

Using a foundation model (via Amazon Bedrock)

You pass the invoice text to a model and ask it to return a structured response. This approach is flexible and can work well, especially when document formats vary.

The trade-off is that behavior is probabilistic. Output formats can drift, retries can produce different results, and confidence is implicit rather than explicit. As the workflow grows, teams often add prompt constraints, validation passes, and repair logic to keep results usable.
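The validation and repair logic described above might look something like the following sketch. The field names, the prompt wording, and the `call_model` callable are all assumptions for illustration. In a real system `call_model` would wrap a Bedrock request.

```python
import json
from typing import Callable, Optional

# Hypothetical field names; choose whatever your finance pipeline expects.
REQUIRED_FIELDS = ("supplier_name", "invoice_number", "invoice_date", "total")


def parse_invoice_json(raw: str) -> Optional[dict]:
    """Return the required fields, or None if the model output is unusable."""
    try:
        data = json.loads(raw)
    except json.JSONDecodeError:
        return None  # the model wrapped or truncated the JSON
    if not isinstance(data, dict):
        return None
    if any(data.get(name) in (None, "") for name in REQUIRED_FIELDS):
        return None  # a required field is missing or empty
    return {name: data[name] for name in REQUIRED_FIELDS}


def extract_with_retries(call_model: Callable[[str], str], invoice_text: str,
                         max_attempts: int = 3) -> Optional[dict]:
    """Ask the model for structured output, retrying when validation fails."""
    prompt = ("Return only a JSON object with the keys "
              + ", ".join(REQUIRED_FIELDS)
              + " for this invoice:\n" + invoice_text)
    for _ in range(max_attempts):
        parsed = parse_invoice_json(call_model(prompt))
        if parsed is not None:
            return parsed
    return None  # caller decides on a fallback, e.g. human review


# Dry run: a fake model that drifts on the first reply and behaves on the second.
replies = iter([
    'Sure! Here is the JSON you asked for: {"supplier_name": "Acme"',
    '{"supplier_name": "Acme GmbH", "invoice_number": "INV-1", '
    '"invoice_date": "2026-01-15", "total": "120.00"}',
])
result = extract_with_retries(lambda prompt: next(replies), "ACME GmbH ... Total 120.00")
print(result)
```

Note what the check cannot do: it confirms the shape of the answer, not its correctness. A well-formed but wrong invoice number passes validation, which is why confidence stays implicit with this approach.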

Using a purpose-built service (Amazon Textract)

Textract’s Analyze Expense API is designed specifically for invoices and receipts. It returns structured fields with confidence scores, using models trained for financial documents.

The output schema is stable and failure modes are explicit. Costs scale linearly with page count rather than prompt complexity.
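For comparison, here is a minimal sketch of reading summary fields from an Analyze Expense response. The response shape (`ExpenseDocuments`, `SummaryFields`, `Type`, `ValueDetection`) follows the Textract API, while the helper names and the trimmed sample response are illustrative assumptions.

```python
from typing import Any


def summary_fields(response: dict) -> dict:
    """Map each normalized field type to its best (text, confidence) pair."""
    best: dict = {}
    for document in response.get("ExpenseDocuments", []):
        for field in document.get("SummaryFields", []):
            field_type = field.get("Type", {}).get("Text")
            value = field.get("ValueDetection", {})
            if not field_type or "Text" not in value:
                continue
            confidence = value.get("Confidence", 0.0)  # reported on a 0-100 scale
            # Keep the highest-confidence candidate when a type repeats.
            if field_type not in best or confidence > best[field_type][1]:
                best[field_type] = (value["Text"], confidence)
    return best


def analyze_invoice(client: Any, bucket: str, key: str) -> dict:
    """Run Analyze Expense on a document in S3 (`client` is a boto3 "textract" client)."""
    response = client.analyze_expense(
        Document={"S3Object": {"Bucket": bucket, "Name": key}}
    )
    return summary_fields(response)


# Trimmed, made-up sample in the shape Textract returns.
sample_response = {
    "ExpenseDocuments": [{
        "SummaryFields": [
            {"Type": {"Text": "VENDOR_NAME", "Confidence": 99.0},
             "ValueDetection": {"Text": "Acme GmbH", "Confidence": 98.5}},
            {"Type": {"Text": "TOTAL", "Confidence": 97.0},
             "ValueDetection": {"Text": "120.00", "Confidence": 88.0}},
            {"Type": {"Text": "TOTAL", "Confidence": 95.0},
             "ValueDetection": {"Text": "120.00 EUR", "Confidence": 93.2}},
        ]
    }]
}
fields = summary_fields(sample_response)
print(fields)
```

The parsing code is plain dictionary traversal. There is no prompt, and every value arrives with a confidence score the rest of the pipeline can act on.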

Why Deterministic Behavior Matters More Than Intelligence

For invoice extraction, the problem is not whether the model understands the document.

The real problem is whether the system behaves predictably when something goes wrong.

Finance workflows depend on consistent fields, known confidence levels, and repeatable results. Deterministic services make it easier to build validation, auditing, and fallback paths without growing complexity elsewhere in the system.

This is why many mature architectures follow a hybrid pattern:

- Use Textract for extraction and confidence scoring

- Use foundation models for reasoning, summarization, or anomaly explanation

The result is a system that is easier to operate, easier to budget, and easier to trust.
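One way to picture the hybrid pattern is a confidence gate between extraction and everything downstream. The sketch below assumes extracted fields arrive as `(text, confidence)` pairs keyed by Textract-style field types. The required-field list and the 90-point threshold are assumptions you would tune against your own data.

```python
# Assumed required fields, named after Textract's normalized expense types.
REQUIRED = ("VENDOR_NAME", "INVOICE_RECEIPT_ID", "INVOICE_RECEIPT_DATE", "TOTAL")


def route_invoice(fields: dict, min_confidence: float = 90.0) -> dict:
    """Split extracted fields into accepted values and ones needing follow-up."""
    accepted = {}
    needs_review = []
    for name in REQUIRED:
        text, confidence = fields.get(name, (None, 0.0))
        if text is not None and confidence >= min_confidence:
            accepted[name] = text
        else:
            needs_review.append(name)  # missing or low-confidence
    return {
        "accepted": accepted,
        "needs_review": needs_review,
        "auto_approve": not needs_review,
    }


# Made-up extraction result: one weak field and one missing field.
decision = route_invoice({
    "VENDOR_NAME": ("Acme GmbH", 99.1),
    "INVOICE_RECEIPT_ID": ("INV-1", 97.4),
    "INVOICE_RECEIPT_DATE": ("2026-01-15", 71.0),
})
print(decision)
```

Invoices that pass the gate flow straight into the finance pipeline. The ones that do not are the narrow slice where a human reviewer, or a foundation model asked to explain the anomaly, earns its cost.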

From PoC to Product (The Boring Checklist)

Most AI prototypes fail for the same reason: the team validated that a model works, but never validated that the system around the model works. The moment real users arrive, every layer of the AI stack – whether it is Bedrock, SageMaker, or a purpose-built service – starts behaving like production infrastructure instead of a demo.

The failures are rarely about model quality. They are about operational reality: token limits, throttling, request bursts, permission boundaries, and costs that grow faster than the rest of the architecture. A Bedrock-based system hits guardrail and quota limits. A SageMaker deployment hits scaling and cost walls. A document pipeline built on Textract and Transcribe hits throughput and workflow orchestration issues.

Turning a proof-of-concept into a product means treating AI exactly like any other production dependency. You need to make failures explicit, budget consumption visible, and behavior observable. That means retries and fallbacks, request and cost monitoring, and guardrails that prevent AI from producing outputs you cannot ship to customers.
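As one example of making failures explicit, here is a minimal retry helper with exponential backoff and jitter. It is a generic sketch rather than an AWS API: the helper name, the defaults, and the simulated failure are assumptions, and `is_retryable` is where you would decide which errors count as throttling.

```python
import random
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_backoff(operation: Callable[[], T],
                      is_retryable: Callable[[Exception], bool],
                      max_attempts: int = 4,
                      base_delay: float = 0.5,
                      sleep: Callable[[float], None] = time.sleep) -> T:
    """Run `operation`, retrying retryable errors with exponential backoff."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception as error:
            # Give up on the last attempt or on errors that will not heal.
            if attempt == max_attempts or not is_retryable(error):
                raise
            # Full jitter: wait a random time up to base_delay * 2^(attempt - 1).
            sleep(random.uniform(0, base_delay * 2 ** (attempt - 1)))
    raise RuntimeError("unreachable")


# Dry run: an operation that fails twice with a simulated throttle.
calls = {"count": 0}


def flaky() -> str:
    calls["count"] += 1
    if calls["count"] < 3:
        raise TimeoutError("simulated throttle")
    return "ok"


delays: list = []
result = call_with_backoff(flaky, lambda e: isinstance(e, TimeoutError),
                           sleep=delays.append)
print(result, calls["count"], len(delays))
```

With boto3, `is_retryable` could inspect the error code on `botocore.exceptions.ClientError`, and the SDK's own retry configuration covers many cases before you need a wrapper like this. The wrapper is still a natural place to hang a fallback path or a metric for every retry.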

AWS’s managed services make this easier, but only if you design for production from day one. Otherwise, you are testing a demo, not a product.

Final Thoughts

The Bedrock vs. SageMaker question is not about which service is “better.” It is about choosing the right level of abstraction and ownership for each problem you are trying to solve. In many cases, the best option is not to use a foundation model at all. Purpose-built AI services and embedded tools like Amazon Q already solve large classes of business problems with greater predictability, lower cost, and far less operational complexity.

When generative AI is the right tool, Amazon Bedrock allows teams to move quickly by consuming models and guardrails as a managed layer, so they can focus on product behavior and user experience instead of infrastructure. Amazon SageMaker becomes relevant only when AI turns into a core business asset that justifies owning model weights, training pipelines, and long-term unit economics.

Most teams do not struggle with AI because the models are wrong. They struggle because they choose the wrong layer of the stack to own for the job at hand. The best AI architecture is the one that delivers a real product outcome, with a cost model you understand and an operating model your team can sustain.