Stop Fine-Tuning: Why RAG on AWS is the Fastest Path to Production-Ready GenAI

When companies start building generative AI solutions, the first instinct is almost always the same: “Let’s fine-tune the model.”

It sounds logical – you have proprietary data, domain-specific terminology, and internal workflows, so surely the model needs additional training to understand your business.

In practice, fine-tuning is rarely the fastest path to production. It introduces infrastructure complexity, retraining cycles, governance overhead, and longer iteration times – often before you’ve even validated the real problem.

In most production scenarios, the bottleneck isn’t model intelligence. It’s access to the right context at the right time.

That is why Retrieval-Augmented Generation (RAG), especially when implemented with managed services on AWS, is usually the more practical, lower-risk, and faster route to production-ready generative AI (GenAI).

TL;DR: Executive Summary

If you are building production GenAI, start with RAG, not fine-tuning.

Fine-tuning modifies model weights, while RAG changes how the model accesses knowledge. For most organizations, the challenge is not improving reasoning – it is grounding responses in proprietary, up-to-date information.

RAG on AWS allows you to deploy faster, update knowledge without retraining, reduce MLOps overhead, and maintain clearer governance boundaries.

Fine-tuning has its place, but it should be a deliberate optimization step, not the default starting point.

What RAG Changes

RAG is not a new type of model – it is an architectural pattern.

Instead of embedding knowledge directly into model weights, RAG retrieves relevant information at runtime and injects it into the prompt before generation. The model remains general-purpose and your company knowledge remains external.

Before going further, a quick refresher on the fundamentals.

At a high level, the RAG flow is straightforward:

- Documents are chunked and converted into embeddings, numerical representations of their meaning.

- The embeddings are stored in a vector index, a data store built for fast similarity search.

- A user query is embedded with the same model (translated into numbers the same way) and matched against that index.

- The most relevant content is inserted into the prompt.

- The model generates a response grounded in that context.
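To make the five steps concrete, here is a minimal, dependency-free sketch. It assumes a toy "embedding" (a sparse word-count vector) purely for illustration; a real system would call a managed embedding model such as Amazon Titan Embeddings. The helper names `embed`, `build_index`, `retrieve`, and `build_prompt` are hypothetical.

```python
import math
import re
from collections import Counter


def embed(text: str) -> Counter:
    """Toy stand-in for an embedding model: a sparse word-count vector."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse vectors."""
    dot = sum(count * b[word] for word, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def build_index(chunks: list[str]) -> list[tuple[str, Counter]]:
    """Steps 1-2: embed every chunk and keep (text, vector) pairs as the 'vector index'."""
    return [(chunk, embed(chunk)) for chunk in chunks]


def retrieve(index: list[tuple[str, Counter]], query: str, k: int = 2) -> list[str]:
    """Step 3: embed the query with the same function and rank chunks by similarity."""
    q = embed(query)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


def build_prompt(query: str, context: list[str]) -> str:
    """Step 4: inject the retrieved context; step 5 would send this prompt to the model."""
    sources = "\n".join(f"- {c}" for c in context)
    return f"Answer using only these sources:\n{sources}\n\nQuestion: {query}"


if __name__ == "__main__":
    index = build_index([
        "Employees accrue 25 vacation days per year.",
        "Expense reports are due within 30 days.",
        "The VPN requires multi-factor authentication.",
    ])
    question = "How many vacation days do employees get?"
    print(build_prompt(question, retrieve(index, question, k=1)))
```

Nothing in this sketch touches the model's weights: updating knowledge means calling `build_index` again on the new documents.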

Fine-tuning changes the model itself. RAG changes the data pipeline around it. This is an important distinction to remember.

Fine-tuned models operate like students taking a closed-book exam: they are trained on the data and must recall it from memory. RAG systems operate like students taking an open-book exam: the model consults approved reference material before answering.

In environments where knowledge changes frequently, open-book systems are more practical and more reliable.

The Fine-Tuning Trap

Fine-tuning is not inherently wrong. It is simply often premature or overkill.

Teams reach for fine-tuning because it feels like customization. If responses are generic, retrain. If terminology is off, retrain. If outputs are inconsistent, retrain.

The problem is that fine-tuning solves behavioral adaptation, not knowledge grounding. When the real issue is missing or poorly structured context, retraining the model adds cost without addressing the root cause.

Fine-tuning introduces structural commitments. You need curated training datasets, GPU-backed training jobs, evaluation pipelines, version management, and lifecycle governance. Knowledge updates require retraining cycles. Foundation model upgrades require regression testing. Operational scope expands from application engineering into full MLOps.

For organizations whose documentation changes weekly or whose policies evolve regularly, this becomes friction. What began as acceleration becomes overhead.

If your objective is grounding responses in proprietary information, fine-tuning is often solving the wrong layer of the problem.

RAG on AWS: A Production-Ready Architecture

RAG becomes powerful when deployed on infrastructure designed for scale, governance, and security. On AWS, the architecture is modular and fully managed.

In a production-ready RAG architecture on AWS, the core components are:

- Foundation model for generation

- Embedding model for semantic representation

- Vector index for similarity search

- Retrieval orchestration layer

- Guardrails and governance controls

- IAM and network isolation

On AWS specifically, this typically maps to:

- Generation model via Amazon Bedrock, for example Amazon Nova Lite or Amazon Nova 2 Lite

- Embedding model via Amazon Bedrock, for example Amazon Titan Embeddings

- Vector storage using Amazon S3 Vectors or Amazon OpenSearch Service

- Retrieval orchestration using Knowledge Bases for Amazon Bedrock

- Governance and security using Guardrails, IAM, VPC endpoints, and logging

Amazon Bedrock provides unified access to foundation models without requiring infrastructure management. Knowledge Bases handle ingestion, embedding generation, indexing, and retrieval orchestration.
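For orientation, here is a minimal sketch of querying a Knowledge Base through the `retrieve_and_generate` operation of the boto3 `bedrock-agent-runtime` client. The knowledge base ID and model ARN are placeholders you must supply, and the request is built in a separate function so it can be inspected without AWS credentials.

```python
def build_rag_request(question: str, knowledge_base_id: str, model_arn: str, top_k: int = 5) -> dict:
    """Keyword arguments for bedrock-agent-runtime retrieve_and_generate()."""
    return {
        "input": {"text": question},
        "retrieveAndGenerateConfiguration": {
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                "knowledgeBaseId": knowledge_base_id,
                "modelArn": model_arn,  # placeholder: ARN of the generation model you use
                "retrievalConfiguration": {
                    "vectorSearchConfiguration": {"numberOfResults": top_k}
                },
            },
        },
    }


def ask(question: str, knowledge_base_id: str, model_arn: str) -> str:
    """Retrieve, augment, and generate in one managed call."""
    import boto3  # lazy import so build_rag_request works without the AWS SDK

    client = boto3.client("bedrock-agent-runtime")
    response = client.retrieve_and_generate(
        **build_rag_request(question, knowledge_base_id, model_arn)
    )
    return response["output"]["text"]
```

The response also carries a `citations` list that maps parts of the answer back to retrieved source chunks, which is useful for showing users where an answer came from.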

For vector storage, you have two primary options.

- Amazon OpenSearch Service provides scalable semantic search and supports advanced retrieval patterns such as hybrid search. It is well suited to high-volume query workloads and applications that need more complex search logic.

- Amazon S3 Vectors adds vector-native storage to S3. Embeddings are stored in dedicated vector buckets and can be queried by similarity without provisioning a separate search cluster. For many RAG workloads, especially document-heavy knowledge bases, this can significantly simplify the architecture and reduce cost.

The architectural principle remains the same: knowledge stays external, searchable, and independently governable from the model.

Amazon Bedrock Guardrails enable content filtering, denied topics, and response constraints. IAM roles enforce least privilege. VPC endpoints keep traffic between your VPC and Bedrock off the public internet. Logging through Amazon CloudWatch provides observability.
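As a starting point for least privilege, the sketch below builds an IAM policy scoped to one knowledge base and one foundation model. It assumes the application calls `Retrieve` and then invokes the model itself; the account ID, knowledge base ID, and model ID are placeholders. Calling `RetrieveAndGenerate`, or invoking a model through a cross-Region inference profile, needs additional actions or resource ARNs, so check the Bedrock IAM documentation before relying on this.

```python
import json


def rag_app_policy(region: str, account_id: str, knowledge_base_id: str, model_id: str) -> dict:
    """IAM policy allowing one knowledge base to be queried and one model to be invoked."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Sid": "QueryOneKnowledgeBase",
                "Effect": "Allow",
                "Action": ["bedrock:Retrieve"],
                "Resource": f"arn:aws:bedrock:{region}:{account_id}:knowledge-base/{knowledge_base_id}",
            },
            {
                "Sid": "InvokeOneFoundationModel",
                "Effect": "Allow",
                "Action": ["bedrock:InvokeModel"],
                # Foundation-model ARNs have an empty account field.
                "Resource": f"arn:aws:bedrock:{region}::foundation-model/{model_id}",
            },
        ],
    }


if __name__ == "__main__":
    print(json.dumps(rag_app_policy("eu-central-1", "123456789012", "KB123", "amazon.nova-lite-v1:0"), indent=2))
```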

This is not a prototype pattern. It is a production-ready architecture built entirely on managed services.

From Question to Answer: How the Architecture Flows

Understanding the execution path clarifies why RAG scales well.

The RAG process begins with ingestion. Documents are parsed, chunked into semantically coherent segments, cleaned, and enriched with metadata. Good chunking strategy matters. Overly large chunks reduce precision. Arbitrary splits degrade coherence.
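The simplest baseline is fixed-size windows with overlap: the overlap keeps a sentence that straddles a boundary retrievable from both neighboring chunks. This is a generic sketch, not how Bedrock chunks internally, and it counts words as a stand-in for model tokens.

```python
def chunk_words(text: str, max_words: int = 200, overlap: int = 40) -> list[str]:
    """Split text into windows of up to max_words words, overlapping by `overlap` words.

    Words approximate tokens here; a production pipeline would count model tokens.
    """
    if not 0 <= overlap < max_words:
        raise ValueError("overlap must be >= 0 and smaller than max_words")
    words = text.split()
    step = max_words - overlap  # how far each window advances
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + max_words]))
        if start + max_words >= len(words):
            break  # this window already reaches the end of the text
    return chunks
```

For example, ten words with `max_words=4` and `overlap=1` yield three chunks, each sharing one word with its neighbor. Semantic or hierarchical strategies replace the fixed window with boundaries derived from meaning or document structure.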

Bedrock Knowledge Bases support several chunking strategies, including standard, hierarchical, semantic, and multimodal. You can read more about them in the Amazon Bedrock User Guide.
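When a data source is created programmatically, the strategy is chosen through the `chunkingConfiguration` inside `vectorIngestionConfiguration` (for example in the boto3 `bedrock-agent` client's `create_data_source`). The sketch covers two strategies; the token values are illustrative starting points, not recommendations.

```python
def chunking_config(strategy: str) -> dict:
    """Return a Bedrock Knowledge Base chunkingConfiguration for a data source."""
    if strategy == "fixed":
        return {
            "chunkingStrategy": "FIXED_SIZE",
            "fixedSizeChunkingConfiguration": {"maxTokens": 300, "overlapPercentage": 20},
        }
    if strategy == "hierarchical":
        return {
            "chunkingStrategy": "HIERARCHICAL",
            "hierarchicalChunkingConfiguration": {
                # Parent level first, then the smaller child level used for matching.
                "levelConfigurations": [{"maxTokens": 1500}, {"maxTokens": 300}],
                "overlapTokens": 60,
            },
        }
    raise ValueError(f"unsupported strategy in this sketch: {strategy}")


# Usage: vectorIngestionConfiguration={"chunkingConfiguration": chunking_config("fixed")}
```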

Each chunk is converted into an embedding using a managed embedding model accessed through Bedrock. These embeddings are stored in S3 Vectors or OpenSearch.
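Knowledge Bases call the embedding model for you during ingestion. If you want to inspect embeddings or run your own pipeline, a direct call might look like the sketch below, which assumes Amazon Titan Text Embeddings V2 (model ID `amazon.titan-embed-text-v2:0`) via `invoke_model` on the `bedrock-runtime` client. Whatever model and dimension you pick must be the same for ingestion and for queries.

```python
import json

TITAN_V2 = "amazon.titan-embed-text-v2:0"


def titan_request_body(text: str, dimensions: int = 1024) -> str:
    """JSON request body for Titan Text Embeddings V2 (sizes 256, 512, or 1024)."""
    return json.dumps({"inputText": text, "dimensions": dimensions, "normalize": True})


def parse_embedding(response_body: bytes) -> list[float]:
    """Extract the vector from the model's JSON response."""
    return json.loads(response_body)["embedding"]


def embed_with_bedrock(text: str, dimensions: int = 1024) -> list[float]:
    import boto3  # lazy import: the helpers above work without the AWS SDK

    client = boto3.client("bedrock-runtime")
    response = client.invoke_model(modelId=TITAN_V2, body=titan_request_body(text, dimensions))
    return parse_embedding(response["body"].read())
```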

When a user submits a question, the system generates a query embedding and performs a similarity search. Metadata filters can restrict results by department, classification level, or geography before generation begins.
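With Knowledge Bases, metadata filters are passed in the `retrieve` request. The sketch below builds equality filters and combines several of them with `andAll`. The keys `department` and `region` are hypothetical: they only work if matching attributes were attached to the documents at ingestion (for S3 sources, typically through a `.metadata.json` file next to each document).

```python
def build_retrieve_request(knowledge_base_id: str, question: str, filters: dict, top_k: int = 5) -> dict:
    """Keyword arguments for bedrock-agent-runtime retrieve() with equality metadata filters."""
    conditions = [{"equals": {"key": k, "value": v}} for k, v in filters.items()]
    vector_search: dict = {"numberOfResults": top_k}
    if len(conditions) == 1:
        vector_search["filter"] = conditions[0]
    elif conditions:
        vector_search["filter"] = {"andAll": conditions}  # every condition must match
    return {
        "knowledgeBaseId": knowledge_base_id,
        "retrievalQuery": {"text": question},
        "retrievalConfiguration": {"vectorSearchConfiguration": vector_search},
    }


# Usage (requires AWS credentials):
#   client = boto3.client("bedrock-agent-runtime")
#   results = client.retrieve(**build_retrieve_request("KB123", "Travel policy?", {"department": "finance"}))
#   for r in results["retrievalResults"]: print(r["score"], r["content"]["text"][:80])
```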

The retrieved segments are then assembled into a structured prompt. Redundant passages are trimmed. Token limits are respected. Instructions constrain the model to answer using only the provided material.
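A minimal sketch of that assembly step: drop duplicate passages, stop before a budget is exceeded, number the sources, and instruct the model to stay within them. It assumes passages arrive sorted best-first and counts words as a rough proxy for tokens; swap in the target model's tokenizer for real budgets.

```python
def assemble_prompt(question: str, passages: list[str], max_words: int = 1500) -> str:
    """Deduplicate passages, respect a word budget, and constrain the model to the sources."""
    seen: set[str] = set()
    kept: list[str] = []
    used = 0
    for passage in passages:  # assumed sorted by relevance, best first
        key = " ".join(passage.lower().split())  # normalize case and whitespace to catch duplicates
        if key in seen:
            continue
        cost = len(passage.split())
        if used + cost > max_words:
            break  # keep the most relevant passages that fit
        seen.add(key)
        kept.append(passage)
        used += cost
    sources = "\n\n".join(f"[{i}] {p}" for i, p in enumerate(kept, start=1))
    return (
        "Answer the question using only the numbered sources below and cite them as [n]. "
        "If the sources do not contain the answer, say you do not know.\n\n"
        f"{sources}\n\nQuestion: {question}"
    )
```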

The model generates a grounded response. Post-processing may include confidence scoring, formatting normalization, or policy validation.
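As one illustration of post-processing, the sketch below normalizes whitespace and applies a crude policy check. It assumes the prompt numbered the retrieved sources and asked the model to cite them as `[1]`, `[2]`, and so on; an answer with no citations, or with a citation to a source that was never provided, is flagged as ungrounded. The function name and the policy itself are hypothetical.

```python
import re


def postprocess(answer: str, num_sources: int) -> dict:
    """Normalize formatting and check that citations point at provided sources."""
    text = re.sub(r"[ \t]+", " ", answer).strip()  # collapse runs of spaces and tabs
    cited = {int(n) for n in re.findall(r"\[(\d+)\]", text)}
    invalid = sorted(n for n in cited if not 1 <= n <= num_sources)
    return {
        "text": text,
        "cited_sources": sorted(cited),
        "invalid_citations": invalid,
        # Policy: at least one citation, and none that point outside the provided sources.
        "grounded": bool(cited) and not invalid,
    }
```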

Every stage can be logged: retrieved document IDs, similarity scores, prompt templates, response latency. This observability makes the system diagnosable.
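A sketch of one structured log record per request. The `results` argument follows the item shape returned by the Knowledge Base `retrieve` API (`score`, `location.s3Location.uri`); the field names in the record itself are illustrative, not a required schema.

```python
import json
import logging
import time

logger = logging.getLogger("rag")


def log_rag_call(query_id: str, results: list[dict], template_name: str, started: float) -> dict:
    """Emit one JSON log record with retrieved sources, scores, template, and latency."""
    record = {
        "query_id": query_id,
        "template": template_name,
        "retrieved": [
            {
                "uri": r.get("location", {}).get("s3Location", {}).get("uri"),
                "score": r.get("score"),
            }
            for r in results
        ],
        "latency_ms": round((time.perf_counter() - started) * 1000, 1),
    }
    logger.info(json.dumps(record))  # one JSON object per line is easy to search and aggregate
    return record


# Usage: started = time.perf_counter(); ...retrieve and generate...; log_rag_call(qid, results, "v1", started)
```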

Unlike fine-tuned systems, behavior is not buried in weights. It is visible in the pipeline.

When Fine-Tuning Actually Makes Sense

Fine-tuning makes sense when the objective is to modify reasoning behavior, not inject knowledge.

- If your organization relies on proprietary decision frameworks, structured reasoning pipelines, or highly specialized transformation logic that cannot be reliably enforced through prompts alone, weight adaptation may be justified.

- Extreme domain specialization can also warrant fine-tuning. If retrieval quality is strong yet outputs consistently lack domain fluency, the limitation may lie in the model’s internal representation rather than context availability.

- Latency-sensitive edge deployments may favor compact fine-tuned models to reduce runtime dependencies.

- Certain regulatory environments may prefer versioned, certified model artifacts with frozen behavior for audit purposes.

The key is alignment. Use fine-tuning to shape behavior. Use RAG to supply knowledge.

RAG vs Fine-Tuning: A Decision Framework

Choosing between RAG and fine-tuning is a business decision.

Speed to MVP

RAG typically wins. There are no training cycles or weight validation loops. The critical path is integration and retrieval quality.

Cost Over 12 Months

Fine-tuning introduces data preparation effort, training compute, model hosting, and retraining costs. RAG shifts spending toward inference and retrieval infrastructure, with no retraining when documents change.
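These cost components can be compared with a simple 12-month model. The sketch is hypothetical: it contains no AWS prices, every input is an estimate you supply, and it folds re-ingestion costs into the RAG retrieval line.

```python
def twelve_month_cost_rag(monthly_inference: float, monthly_retrieval: float) -> float:
    """RAG: recurring inference plus retrieval and vector storage; updates only re-index."""
    return 12 * (monthly_inference + monthly_retrieval)


def twelve_month_cost_fine_tuning(
    data_prep: float,
    training_run: float,
    retrains_per_year: int,
    monthly_hosting: float,
    monthly_inference: float,
) -> float:
    """Fine-tuning: one-off data prep, an initial run plus retrains, and recurring hosting and inference."""
    return data_prep + training_run * (1 + retrains_per_year) + 12 * (monthly_hosting + monthly_inference)
```

The term to watch is `retrains_per_year`: it ties fine-tuning cost directly to how often your knowledge changes, while the RAG side absorbs those changes through re-indexing.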

Maintenance Overhead

Fine-tuned models require lifecycle management, drift monitoring, and compatibility validation. RAG architectures are modular. Retrieval, storage, and generation layers evolve independently.

Long-Term Advantage

Fine-tuning can embed proprietary reasoning patterns. RAG strengthens institutional knowledge by making documentation structured, searchable, and operational.

In most production contexts, the durable advantage lies in knowledge quality and governance, not custom model weights.

| Dimension | RAG | Fine-Tuning |
|---|---|---|
| Primary Goal | Ground responses in external knowledge | Modify model behavior or reasoning |
| Time to MVP | Fast, integration-driven | Slower, training-driven |
| Knowledge Updates | Re-index changed documents; no retraining | Requires retraining |
| Upfront Investment | Moderate engineering effort | High data and training effort |
| Ongoing Costs | Inference + retrieval infrastructure | Inference + training + model lifecycle |
| Operational Complexity | Modular and diagnosable | Requires MLOps discipline |
| Best For | Knowledge-heavy production use cases | Behavioral adaptation and specialized reasoning |
| Risk Profile | Lower structural commitment | Long-term architectural commitment |

Deploy a RAG Agent in Minutes

Architectural theory is useful. Deployment speed is decisive.

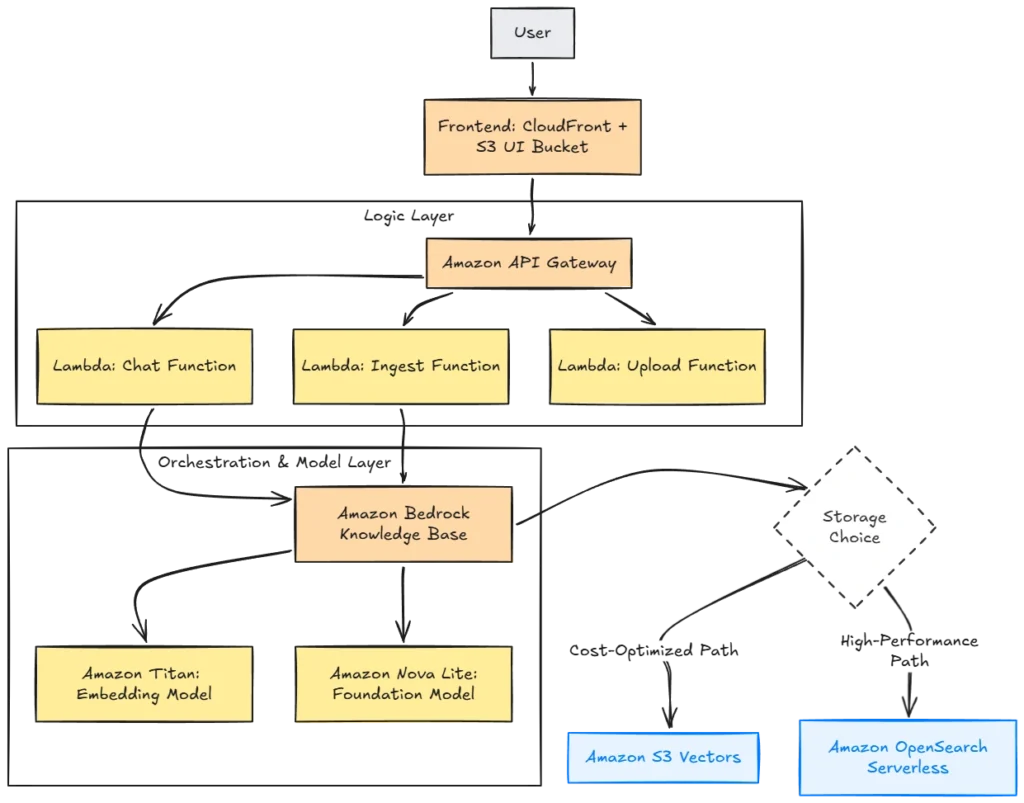

We built a one-click CloudFormation stack that provisions a complete, serverless Bedrock RAG agent in under ten minutes.

The stack deploys everything you need to get started with RAG on AWS:

- S3 and CloudFront for the frontend

- API Gateway and Lambda for application logic

- Bedrock Knowledge Base for retrieval

- Amazon Nova Lite for generation

- Vector storage using S3 Vectors or OpenSearch Serverless

- IAM roles with least privilege

The S3 Vectors option provides a significantly lower-cost configuration: roughly $0.60 per day in the eu-central-1 region for moderate usage. OpenSearch Serverless provides higher throughput and advanced search capabilities at a higher cost.

The architecture is serverless: there are no EC2 instances, no container clusters, and no infrastructure to patch. The S3 Vectors path keeps idle costs lowest because it avoids the minimum capacity that OpenSearch Serverless bills even when nothing is being queried. This makes it a practical starting template for startups and small teams that want a RAG pipeline on AWS.

Production hardening typically adds VPC endpoints, WAF, centralized logging, CI/CD, and automated ingestion pipelines. The core design remains intact.
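One common way to automate ingestion, sketched under assumptions: an AWS Lambda function subscribed to S3 `ObjectCreated` notifications on the Knowledge Base's source bucket calls `start_ingestion_job` on the boto3 `bedrock-agent` client. The environment variable names `KNOWLEDGE_BASE_ID` and `DATA_SOURCE_ID` are hypothetical, and the optional `client` parameter exists only so the handler can be tested with a stub.

```python
import os


def handler(event, context, client=None):
    """Start a Knowledge Base ingestion job when new documents land in S3."""
    if client is None:
        import boto3  # lazy import keeps the handler testable without the AWS SDK

        client = boto3.client("bedrock-agent")
    keys = [r["s3"]["object"]["key"] for r in event.get("Records", [])]
    if not keys:
        return {"started": False, "keys": []}
    response = client.start_ingestion_job(
        knowledgeBaseId=os.environ["KNOWLEDGE_BASE_ID"],
        dataSourceId=os.environ["DATA_SOURCE_ID"],
    )
    return {"started": True, "keys": keys, "jobId": response["ingestionJob"]["ingestionJobId"]}
```

An ingestion job syncs the whole data source, so a burst of uploads does not need one job per file. Because a new job may be rejected while another is still running on the same data source, batching events through a queue or a schedule is often a more robust trigger than one call per upload.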

Infrastructure should not be the bottleneck in GenAI adoption. AWS managed services remove most of the infrastructure work, leaving teams free to focus on retrieval quality.

Common Pitfalls in RAG Implementations

RAG fails when implementation discipline fails.

Poor chunking strategy degrades retrieval precision. Embedding mismatches reduce similarity accuracy. Overloading the context window increases latency and dilutes relevance. Ignoring observability turns debugging into guesswork.

Retrieval quality must be validated before optimizing generation. The embedding model must be consistent for both ingestion and queries. Context assembly must respect token budgets and remove redundancy.
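Validating retrieval before tuning generation can start with a small labeled set. The sketch below computes hit rate at k: the share of questions whose top-k results contain at least one document a reviewer marked relevant. The `retrieve(question, k)` callable is a hypothetical adapter, for example a wrapper around the Knowledge Base `retrieve` API that returns document URIs in ranked order.

```python
from typing import Callable


def hit_rate_at_k(
    eval_set: list[tuple[str, set[str]]],
    retrieve: Callable[[str, int], list[str]],
    k: int = 5,
) -> float:
    """Fraction of questions with at least one relevant document among the top k results."""
    if not eval_set:
        raise ValueError("eval_set must not be empty")
    hits = sum(1 for question, relevant in eval_set if relevant & set(retrieve(question, k)))
    return hits / len(eval_set)
```

Tracking this number across chunking, embedding, and filter changes shows whether a change improved retrieval before any prompt or model work begins.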

RAG is not plug-and-play. It is an architectural pattern that rewards disciplined execution.

Final Thoughts: Build Fast, Optimize Later

Production GenAI initiatives rarely fail because models are too weak. They fail because teams introduce complexity before validating value.

RAG provides a disciplined starting point. It grounds responses in proprietary knowledge, reduces operational risk, and accelerates deployment without long-term architectural commitments.

Fine-tune only when you have clear evidence that behavior, not context, is the constraint.

In most cases, the fastest path to production-ready GenAI is not changing the model. It is designing the system around it correctly.